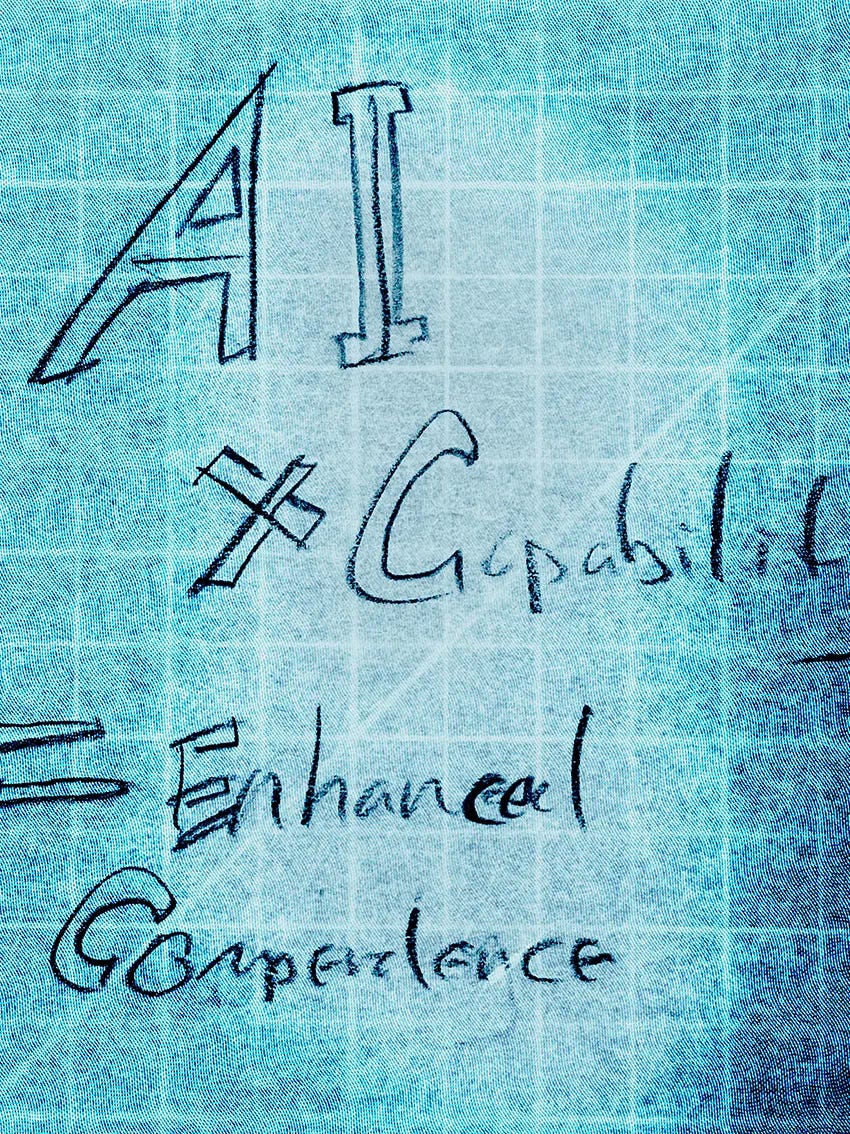

The Equation

AI × capability = enhanced competence. Everyone reads this as a celebration. It's a warning.

AI × Capability = Enhanced Competence.

Everyone agrees with some version of this equation. It gets nodded at in keynotes and repeated in LinkedIn posts about the future of work. It’s the polite version of the AI story, the one where humans still matter, where the tool amplifies what you bring, where collaboration wins. And I suppose it’s true, as far as it goes. But the problem I see is that it doesn’t go far enough. The equation looks like some kind of celebration. Let’s just do this and we win. I think it’s actually a diagnosis, and if you look at it carefully, it’s a warning.

The AI in this equation is not the research frontier or the alignment debate or the question of whether machines will become conscious. It’s the tool on your desk right now: Claude, Cursor, Copilot, Midjourney, the OpenClaw agent that drafts your emails and writes your code. It’s the reason Tuesday’s deliverable looks like what next Thursday’s used to. The amplification is real. A skilled professional with these tools produces work that once would have required a team. Nobody seriously disputes this. But an amplifier scales whatever it’s multiplying. A microphone makes your voice louder. It doesn’t give you something to say. The AI term in this equation is powerful, it’s getting more powerful every quarter, and it is completely dependent on what sits on the other side of the “×”.

That other side is where the trouble starts.

When people hear the word capability in this equation, they hear a constant, a fixed attribute, something you have, like height. You went to school. You practiced for twenty years. You have the capability. AI multiplies it. Story over. Let’s go cure cancer. But capability is not a constant. It’s a variable, and a specific kind of variable: a practiced one. This is the distinction that the equation hides: knowledge versus judgment. Knowledge is durable. It’s tough. Decades ago in architecture school I learned how load-bearing walls work, and I’ll know it decades from now. That understanding sits in my head like a fact, because it is one. Judgment is different. Judgment is the fluid, contextual, instinct-level application of knowledge to situations that don’t come with instructions. It’s knowing what to do when you’re looking at a mess, when the building site doesn’t match the drawings, when the client wants something they can’t articulate and the budget won’t cover what they mean. That kind of capability degrades without exercise. It’s closer to a jump shot than a phone number. You don’t store it. You maintain it.

Years ago, before I was a professional at anything, I was volunteering at a reused materials warehouse on the South Side of Chicago. It was the kind of place that collected anything unwanted or underused, from hula hoops to doorframes. I was a sculptor back then and I’d go looking for metal and objects that might spark a new work. One afternoon I met a man named Tyner White. Tyner was homeless. He built things out of whatever he found in the streets and vacant lots around South Chicago: toys, mobile carts, temporary shelters. Every object solved a problem he had actually encountered, in a place he actually lived, for people he actually knew. But they weren’t just functional. A cart designed to fasten to the back of a bicycle had a large windmill incorporated just for fun. A rain shelter wasn’t quite an umbrella but got the job done with a touch of personality. He was making decisions that went beyond solving the problem, decisions about what the solution should feel like. That’s not resourcefulness. That’s judgment.

What struck me was not the objects themselves but what was behind them. Tyner didn’t have formal training. What he had was a deep, practiced, physical relationship with his materials and his context. He knew what worked because he had built and failed and rebuilt in the same conditions, over and over, with his hands. His knowledge and his judgment were inseparable, fused through practice into something you could see in the finished object but couldn’t extract from it as a set of principles. You couldn’t write down what Tyner knew, because what Tyner knew was inseparable from the act of making.

That is what capability looks like before it gets abstracted into a credential. And it’s exactly the kind of capability that can go away when the practice stops.

An architect who stops designing for five years still knows the principles, or most of them. But put them in front of a real project with real ambiguity and something has shifted. They hesitate where they used to move. They reach for rules where they used to reach for instinct. The knowledge is mostly intact but the practiced application of it is not.

Some professions figured this out a long time ago. The ones where the rust shows. A surgeon’s hands steady or they don’t. A pilot lands the plane or doesn’t. A musician plays the passage or falls apart. When capability has a performative moment, a physical, visible instant where you either have it or you don’t, institutions notice. They build structures to protect it. Recurrency requirements. Mandatory manual hours. Practice regimens that nobody questions because everybody understands what happens without them.

The professions where the rust doesn’t show have no equivalent structure. No recurrency. No mandatory manual hours. No institutional recognition that professional judgment is a perishable skill. And these are precisely the professions where AI is now creating the same maintenance problem that those performance-driven professions identified decades ago. This time it is arriving faster and more broadly, and with one big difference: the tools don’t just fail to protect against the decay. They actively cover it up. A surgeon who hasn’t operated in two years can’t fake it in the operating room. In most professions, the gap between what the tool produces and what the professional actually contributed won’t surface until something goes wrong. An architectural detail that doesn’t work on site. A question from a contractor that requires judgment, not a reference drawing. A condition that doesn’t match the model. The tool can generate the output. It can’t stand behind it.

Now, inside the multiplication.

When the equation works, it delivers exactly what it promises. A professional with actively maintained judgment uses AI and the output is genuinely enhanced, better than either could produce alone. The decisions originate in expertise. The AI accelerates their execution. This is the good version. It’s real and it’s valuable and it is not the whole story.

The tool that multiplies capability can also quietly erode it. The mechanism isn’t dramatic. AI doesn’t take your judgment away. It offers a path around it, a shortcut. Every time you approve a default instead of making a decision, the tool has routed around your judgment. Every time you select from AI-generated options instead of generating options from your own expertise, the tool has done the thinking and you’ve done the choosing. From the outside, the work looks the same. From the inside, something has shifted, from exercising capability to supervising output. And the difference compounds.

The math is particularly tough, because multiplication hides the decline. Let’s say at full capability, the equation produces enhanced competence. At eighty percent, the output is still pretty good. At fifty percent, it passes most quality checks. At ten percent, it’s basically just AI with a human name on it. The decline is gradual, the output stays acceptable the entire way down, and there is no alarm that sounds at any point along the curve. The professional feels productive at every stage. The client sees quality work at every stage. The decay is invisible from every vantage point, until conditions change. And conditions always change.
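To make the arithmetic concrete, here is a rough sketch of the curve described above. Every number in it is an assumption chosen for illustration, not a measurement: the amplification factor of 10, the unaided baseline of 1.0, and the names `AI_FACTOR`, `UNAIDED_BASELINE`, `enhanced_competence`, and `decline_table` are all hypothetical.

```python
# Illustrative only: AI_FACTOR, UNAIDED_BASELINE, and the capability levels
# are assumed values, not data from any study.
AI_FACTOR = 10.0        # assumed amplification supplied by the tool
UNAIDED_BASELINE = 1.0  # output of a fully capable professional with no AI


def enhanced_competence(capability: float, ai_factor: float = AI_FACTOR) -> float:
    """The essay's equation: AI x capability = enhanced competence."""
    return ai_factor * capability


def decline_table(levels=(1.0, 0.8, 0.5, 0.1)):
    """Return (capability, output, looks_fine) rows for each capability level.

    looks_fine is True when the output is at least what a fully capable,
    unaided professional used to deliver.
    """
    rows = []
    for capability in levels:
        output = enhanced_competence(capability)
        rows.append((capability, output, output >= UNAIDED_BASELINE))
    return rows


if __name__ == "__main__":
    for capability, output, looks_fine in decline_table():
        print(f"capability={capability:.0%}  output={output:.1f}  looks fine: {looks_fine}")
```

Under these assumed numbers, every row still meets the old unaided baseline, including the one where capability has fallen to ten percent. That is the sense in which multiplication hides the decline: the product stays acceptable while one of its factors is collapsing.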

A novel problem arrives that doesn’t fit the AI’s training distribution. A tool goes down and the work has to continue without it. A job change demands that you demonstrate what you can do, independent of your tools. A client asks a question that requires judgment, not output, and the answer has to come from you and not from a prompt. These are the moments when the rust shows, and by the time it does, the atrophy is years old.

The cruelty doesn’t stop there. AI has made professional credentials cheap. Anyone can now produce a polished portfolio, a clean resume, a keyword-optimized LinkedIn profile with minimal effort. When output is no longer a reliable signal of what someone can actually do, the only thing left is demonstrated capability: the ability to show judgment in action, to explain why you made the choices you made, to reveal a decision-making process that no tool could replicate. The market is beginning to demand proof of the capability term at precisely the moment that many professionals have quietly let it decay. The signal the market needs is the same thing the equation has been eroding.

So. The equation is a design problem, not a moral one. The question is not whether to use AI. Of course you should; we all need to. The tools are better than what came before them and what comes next will be better still. The question is how to use them in a way that keeps the capability term substantive, how to get the amplification without the atrophy. The answer is not restriction. Telling a working professional to stop using AI is like telling a pilot to abandon autopilot. It misidentifies the threat and ignores the legitimate value of the tool. The tool is not the enemy. The invisible decay is the enemy.

The answer is visibility. If you can see the capability term, if you have some way of knowing whether your professional judgment is being exercised or bypassed during AI-assisted work, then you can make informed choices. You can let the tool lead on routine tasks and bring your full judgment to consequential ones. You can notice when a pattern of engagement has shifted, over weeks or months, from active to passive. You can maintain the variable because you can see it moving.

Capability has a lifecycle. It forms through learning: the uncomfortable, effortful process of building understanding from nothing. And it’s maintained through practice: the ongoing exercise of judgment against real problems with real stakes. Protecting the variable during each phase requires different tools.

But the equation itself is the foundation, and it is simpler than it looks. Everyone is optimizing the left side of the multiplication: better models, better tools, better agents, better prompts. Almost nobody is paying attention to the right side. And the right side is the only part of the equation that is yours. The AI will improve whether you attend to it or not. Your capability won’t. It needs practice the way a muscle needs load, and the most sophisticated tool ever built is quietly offering you reasons to set the weights down.

The most important professional discipline of the next decade is not mastering the AI. It’s protecting the capability that makes the AI worth using.

So, let’s pick up the weights.